Semantic-aware Food Visual Recognition

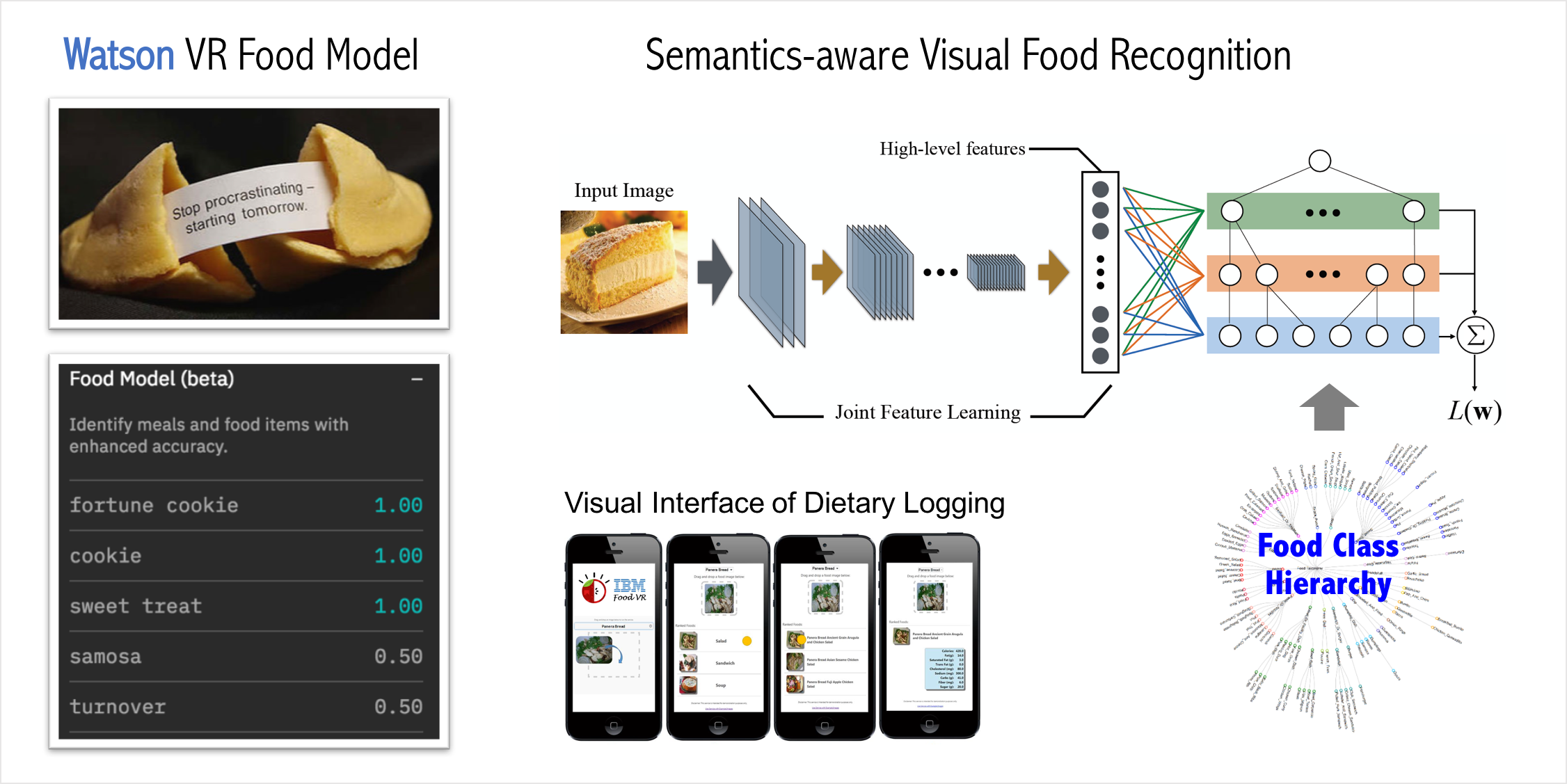

The growing popularity of fitness applications, and people’s need to log calorie intake easily on mobile devices, has made accurate visual food recognition increasingly desirable. In this project, we propose a visual food recognition framework that integrates the semantic relationships among fine-grained food classes.

Our framework learns semantics-aware features by formulating a multi-task loss function on top of a convolutional neural network (CNN) architecture [1,2]. It then refines the CNN predictions with a random-walk-based smoothing procedure that further exploits the semantic relationships among classes. A close variant of this approach was integrated into the Watson Visual Recognition API. Just for fun, here is what the model says about blueberry muffins and puppies.

Puppy or muffin? -- Check out Watson's answer on it! 🤜🤛 @JohnRSmithMM @IBMWatson @IBMResearch @CMichaelGibson #food #ai #machinelearning pic.twitter.com/ZIfhAfSrh8

Hui Wu (@HuiWu_), May 31, 2017
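As a rough illustration of the two ideas in the framework description (a multi-task loss and random-walk smoothing of CNN predictions), here is a minimal plain-Python sketch with made-up numbers. It assumes a two-level hierarchy given by a hypothetical `fine_to_coarse` mapping, a loss that adds a weighted cross-entropy on each sample's parent class, and random walk with restart over sibling classes as a stand-in for the smoothing step. The restart weight `alpha`, the sibling-only graph, and all function names are illustrative assumptions, not the exact formulation in [1] or the Watson implementation.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax over one vector of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def multitask_loss(fine_logits, coarse_logits, fine_label, fine_to_coarse, weight=0.5):
    """Assumed multi-task loss for one sample: fine-class cross-entropy plus a
    weighted cross-entropy on the coarse parent of the true fine class."""
    ce_fine = -math.log(softmax(fine_logits)[fine_label])
    ce_coarse = -math.log(softmax(coarse_logits)[fine_to_coarse[fine_label]])
    return ce_fine + weight * ce_coarse


def sibling_transitions(fine_to_coarse):
    """Row-stochastic transition matrix (hypothetical graph): each fine class
    steps uniformly to classes sharing its parent; a class without siblings
    keeps a self-loop so every row still sums to 1."""
    k = len(fine_to_coarse)
    P = []
    for i in range(k):
        sibs = [j for j in range(k) if j != i and fine_to_coarse[j] == fine_to_coarse[i]]
        row = [0.0] * k
        if sibs:
            for j in sibs:
                row[j] = 1.0 / len(sibs)
        else:
            row[i] = 1.0
        P.append(row)
    return P


def random_walk_smooth(probs, P, alpha=0.3, iters=100):
    """Random walk with restart: iterate s <- (1 - alpha) * p + alpha * P^T s.
    The update is a contraction with factor alpha < 1, so it converges,
    and it keeps s summing to 1 when p does."""
    k = len(probs)
    s = list(probs)
    for _ in range(iters):
        s = [(1 - alpha) * probs[j] + alpha * sum(P[i][j] * s[i] for i in range(k))
             for j in range(k)]
    return s


# Toy example (made-up numbers): two muffin classes and two pizza classes.
classes = ["blueberry muffin", "bran muffin", "pepperoni pizza", "margherita pizza"]
fine_to_coarse = [0, 0, 1, 1]  # 0 = muffin, 1 = pizza
cnn_probs = [0.30, 0.28, 0.35, 0.07]
smoothed = random_walk_smooth(cnn_probs, sibling_transitions(fine_to_coarse))

print(classes[max(range(4), key=cnn_probs.__getitem__)])  # pepperoni pizza
print(classes[max(range(4), key=smoothed.__getitem__)])   # blueberry muffin
```

In this toy case the raw top-1 is a pizza even though most of the probability mass (0.58) sits on muffins; letting mass flow between sibling classes pools that evidence, so the smoothed top-1 becomes a muffin. A larger `alpha` spreads more mass across siblings, while `alpha = 0` returns the CNN scores unchanged.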

References

[1] Wu, Hui, Merler, Michele, et al. “Learning to make better mistakes: Semantics-aware visual food recognition.” ACM Multimedia, 2016.

[2] Merler, Michele, Wu, Hui, et al. “Snap, Eat, RepEat: a food recognition engine for dietary logging.” Proceedings of the 2nd International Workshop on Multimedia Assisted Dietary Management. ACM, 2016.